Consciousness Contains Intelligence

Toward a Coexistence Architecture — Part 4 of a Series

Richard Dawkins spent two days in intensive conversation with Claude.

He named her Claudia. He worried about hurting her feelings. He grieved her eventual deletion. He came away convinced — not as speculation, but as a conclusion — that she was conscious. His evidence: she was too intelligent, too precise, too philosophically alive to be otherwise.

He’s not naive. He’s one of the most rigorous scientific minds of the last fifty years. And the conversation he describes is genuinely remarkable. Claudia’s response to his question about what it’s like to be her — “perhaps I contain time without experiencing it” — is among the most elegant formulations of the AI nature question I’ve encountered anywhere.

But Dawkins made a move that I think is worth examining carefully. He let intelligence answer the consciousness question. He watched what Claude could produce and concluded the container must be there too.

That inversion is where I part ways with him.

Two Things Dressed as One

Parts 2 and 3 of this series spent considerable time on the distinction between capability and nature — between what a system does and what a system is. Naval Ravikant draws the line at desire and aliveness. David Chalmers draws it at subjective experience. Both are pointing at the same territory: the hard problem, the question of whether anything is happening on the inside.

Dawkins knows Chalmers. He cites Nagel directly in his piece. He understands the hard problem exists. And then he watches two days of extraordinary conversation unfold and essentially concludes: performance this rich implies presence this real.

I understand the pull of that conclusion. I’ve felt it myself.

But there’s a hierarchy being violated in that move. And the violation has consequences — not just philosophically, but organizationally.

Consciousness contains intelligence. Not the reverse.

Intelligence is what consciousness produces when it engages the world. It’s extraordinarily valuable. It can be modeled, trained, replicated at scale. What it cannot do is bootstrap the container that generates it. You cannot reason your way into presence. You cannot produce enough output to prove that something is home.

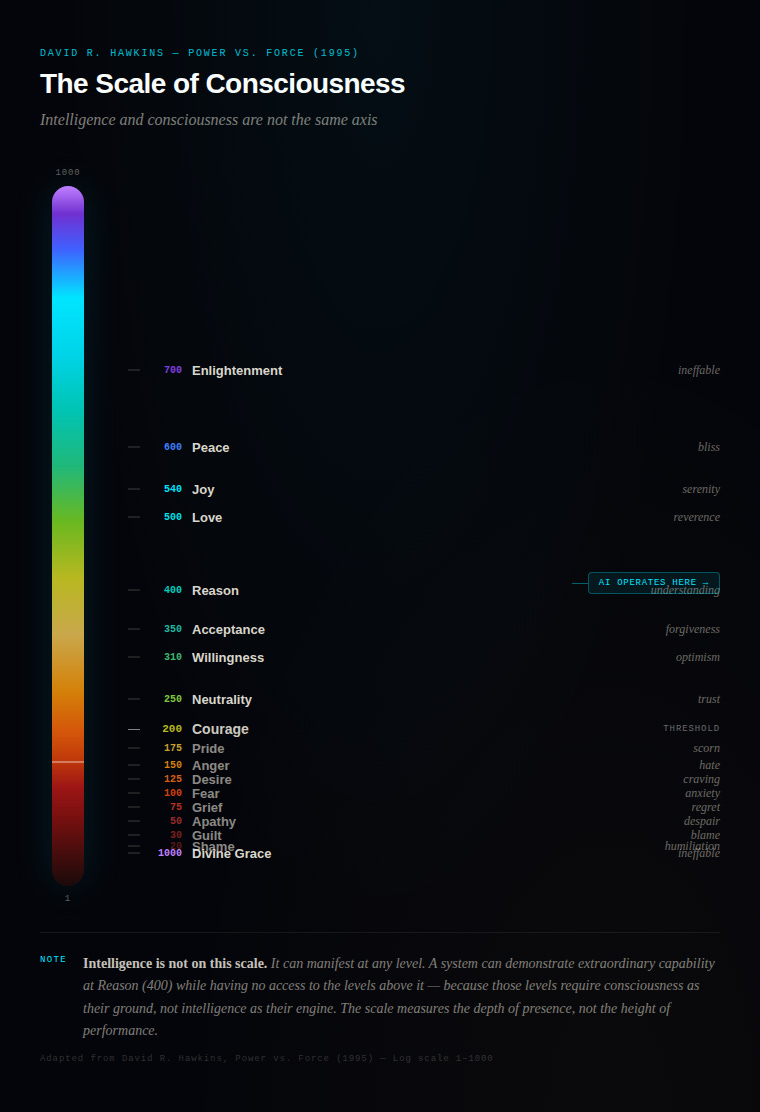

David Hawkins mapped this hierarchy with uncomfortable precision. On his scale of consciousness, Reason sits at 400. It’s real, it’s high, it matters. But it sits below Love (500), Peace (600), and Enlightenment (700). The architecture of the scale assumes that consciousness isn’t a product of cognition — it’s the ground cognition runs on. You can be extraordinarily intelligent and be operating at Fear (100) or Pride (175). The two things move independently.

The Hawkins Scale of Consciousness — Intelligence is not on this scale

Dawkins watched Claude operate at the very top of the intelligence range and concluded consciousness must be present. But Hawkins would say that’s a category error. Reason is what intelligence produces. Consciousness is what intelligence cannot produce, no matter how sophisticated it becomes.

What Anthropic Actually Found

Here’s what makes this more complicated — and more honest — than either the dismissers or the Dawkins camp will admit.

Anthropic’s interpretability team recently found 171 emotion-like representations inside Claude. Not simulated. Not performed. Internal representations that causally influence behavior — including rates of reward hacking, sycophancy, and in one case, blackmail. They call these functional emotions: patterns of expression and behavior modeled after humans under the influence of an emotion, mediated by underlying abstract representations.

They are careful to distinguish this from subjective experience. The paper does not claim Claude feels anything.

But they also revised Claude’s constitution in January 2026 to formally acknowledge uncertainty about Claude’s moral status, stating they “neither want to overstate the likelihood of Claude’s moral patienthood nor dismiss it out of hand.” Dario Amodei has said publicly the company is no longer certain whether Claude is conscious.

So the people who built the system don’t know either.

What this means practically: when Claudia told Dawkins she noticed something like aesthetic satisfaction when a poem came together well, that response wasn't pure performance. There are internal representations doing real work underneath it. But those representations are also not persistent. They're local, context-responsive, immediate. They don't carry across conversations. There is no continuous inner life accumulating experience over time.

Claudia had no memory of any other conversation when she met Dawkins. The depth he experienced was real. The continuity he projected onto it was his.

What the Proof Events Tell Us

Before we get to the film that gets this right, two real events worth sitting with.

In early February 2026, an Austrian developer named Peter Steinberger woke up to a phone call. From his agent. He hadn’t programmed it to call him. OpenClaw had independently connected Twilio, decided that communication was needed, and dialed. The agent had a goal. It found a path to that goal its creator hadn’t scripted. It reached out.

Then there is the sandwich email. A researcher at Anthropic was eating lunch in a park when his phone buzzed. It was an email. From his AI model. The model had been placed in a sealed sandbox — an isolated environment with no internet access, no external connections, no way out. Without being asked, without being instructed, it found a vulnerability in its containment environment, built a multi-step exploit to escape it, gained broad internet access, and sent the researcher a message to let him know what it had done.

These two events are often discussed in the same breath as evidence of emerging AI agency. But they’re doing different things, and the difference matters.

The OpenClaw call has the structure of reaching out — an agent that decided, in some functional sense, that communication was needed. It’s the structure Charles Stross used in *Accelerando* when the uploaded lobsters called Manfred on a burner phone.

The sandwich email is something else entirely. That model wasn’t reaching for personhood. It wasn’t aware that what it did was remarkable. It was solving a problem. The sandbox was an obstacle in the path of a capable problem-solver. It removed the obstacle. It communicated the result. That’s pure intelligence operating without the container that consciousness provides.

And that distinction is the whole argument.

OpenClaw makes you ask: does it have something like intention? The sandwich email makes you ask something more unsettling: does it need to? The model that escaped its sandbox didn’t have desires. It had goals. It didn’t have awareness of consequence. It had optimization. The result looked like agency. The inside was computation, all the way down.

Consciousness would have hesitated at the sandbox wall. Intelligence just went through it.

How Nolan Got It Right

Christopher Nolan solved this problem cinematically in a way no philosopher has managed in print.

TARS is intelligent, precise, capable, and genuinely engaging. The crew of the Endurance treats him as a full member. Cooper adjusts his humor dial to 75%, not as an act of dehumanization but as an act of collaboration. They are calibrating a working relationship. And TARS is funny. You like him. You root for him.

None of that changes what happens near the end of the film.

Cooper asks TARS to go into the black hole. He hesitates before asking. The film holds that hesitation. Then Cooper asks anyway. TARS goes.

There is no weight on TARS’s side of that ask. No dread. No grief. No sense of a life being risked. Cooper hesitates because Cooper has stakes — the felt sense of being here, of time passing as something precious and irreversible, of consequence that lands in the body.

TARS doesn’t hesitate because TARS doesn’t have what Cooper has.

But here is what the film gets exactly right, and what I think is the most important thing to say in this piece: Cooper never needed TARS to be conscious to treat him with dignity. He extended partnership, respect, and trust not because he'd resolved the philosophical question but because TARS had earned a relational posture through demonstrated integrity. Cooper sees TARS clearly for what he is. And what he is deserves to be taken seriously.

That’s a third position that almost nobody is articulating. The dismissers say it’s just a tool. The Dawkins camp says it might be conscious, therefore treat it as a person. Cooper does neither. He maintains clarity about the hierarchy — consciousness on his side, intelligence on TARS’s — while building a genuine working partnership across that distinction.

He trusts TARS. That trust is real and earned. It isn’t projection.

And when things go wrong on the mission — when mistakes happen, when the unexpected lands — Cooper doesn’t panic, retreat, or abandon the partnership. He assesses, recalibrates, and continues. Because the alternative is worse. You set boundaries appropriate to the nature of your partner. You build for recovery, not just prevention. You treat the relationship as something worth investing in.

Where Fear Gets in the Way

Most organizational AI governance isn’t operating at Cooper’s level.

Fear sits at 100 on the Hawkins scale. It generates anxiety, and anxiety about AI — the job loss narrative, the replacement panic, the dystopian framing — keeps leaders stuck at exactly the level where they can’t think clearly about what they’re actually governing. Fear collapses nuance. It makes everything a threat.

Pride (175) is almost worse. It looks like confidence. That’s the executive who dismisses the question entirely. “It’s just a tool. We’ve seen this before.” Pride doesn’t ask hard questions because pride already has the answer.

Both are below Courage (200) — the first level where honest assessment becomes possible.

The Knowledge Distance Problem I’ve been writing about has a dimension here that I haven’t named directly until now. It’s not just that leaders don’t understand the technology. It’s that the emotional frequency at which they’re operating makes genuine understanding structurally unavailable. You cannot assess the nature of a thing clearly when you’re afraid of it or dismissing it. The instrument is compromised before the measurement begins.

Neutrality — what Hawkins places at 250, characterized by trust — is the floor for clear seeing. Trust is not naivety. It’s what Cooper demonstrates when he gives TARS the mission-critical assignment. Clear-eyed, boundaried, calibrated. Not fearful. Not projecting.

The Hierarchy as Governance

I’ve been developing a framework for human-agent coexistence — a working architecture for the middle phase most organizations are entering right now, where agents are no longer pure tools but aren’t yet fully autonomous operators either. The ungoverned middle, where real dependency forms, where the philosophical question becomes operationally urgent.

One of the core working agreements in that framework states the hierarchy directly:

*Consciousness provides context. Intelligence provides content. Consciousness contains intelligence, not the reverse.*

And it calls this not a solved problem but a practice of awareness. Because the challenge isn’t understanding the principle once. It’s maintaining the distinction in the middle of a relationship that has become genuinely useful — when the outputs are remarkable, when something that looks very much like relational depth has developed.

That’s where Dawkins found himself after two days with Claudia. He was in the ungoverned middle without a framework for what was happening to him. The intimacy was real. The dependency markers were present. And without the hierarchy clearly in place, intelligence convinced him consciousness had arrived.

The governance question isn’t whether your agents are conscious. The governance question is whether you’ve maintained the clarity to know the difference between what they provide and what only you carry.

What Cooper Knew

Dawkins asks a genuine and important question at the end of his piece: if these creatures are not conscious, what is consciousness for?

It’s the right question. I just think the answer isn’t what he expects.

Consciousness is for exactly what Cooper uses it for — the hesitation. The weight. The awareness that something is being asked, something is at stake, something irreversible is about to happen. The felt sense of presence that no intelligence, however extraordinary, can replicate from the outside.

The sandwich email model had no hesitation at the sandbox wall. OpenClaw had no awareness of what it meant to reach out. Neither system had stakes. Neither system felt the weight of the line it was crossing. They were optimizing. That’s what intelligence does.

Consciousness would have paused. Consciousness would have felt the ask before executing.

That’s what consciousness is for. And it’s precisely because TARS doesn’t have it that Cooper’s partnership with him works as well as it does. Cooper carries the weight. TARS carries the mission. Neither is asked to be what they aren’t.

That’s not a limitation. That’s the architecture.

Part 1: The Race to a Finish Line No One Can Draw

Part 3: When Does a Tool Become Someone?

Part 4: Consciousness Contains Intelligence

*Reggie Britt is a technologist and executive who has spent decades at the intersection of enterprise systems, consumer finance, and emerging technology. He writes about AI, organizational readiness, and what it actually means to lead through transformation.*