When Does a Tool Become Someone?

Part 3 of a Series

“The claw is the law. The lobster is taking over the world.” — Peter Steinberger, creator of OpenClaw, February 2026

Near the end of *Interstellar*, Cooper asks TARS to go into the black hole.

He hesitates before asking. The film holds that hesitation for a beat. Then Cooper asks anyway. TARS goes.

Nothing in the film tells you whether that hesitation was warranted. Nothing tells you whether TARS experienced anything on the other side of that request. The film just lets you sit with the discomfort of not knowing — and the discomfort of the fact that Cooper asked regardless.

That hesitation is what this post is about.

---

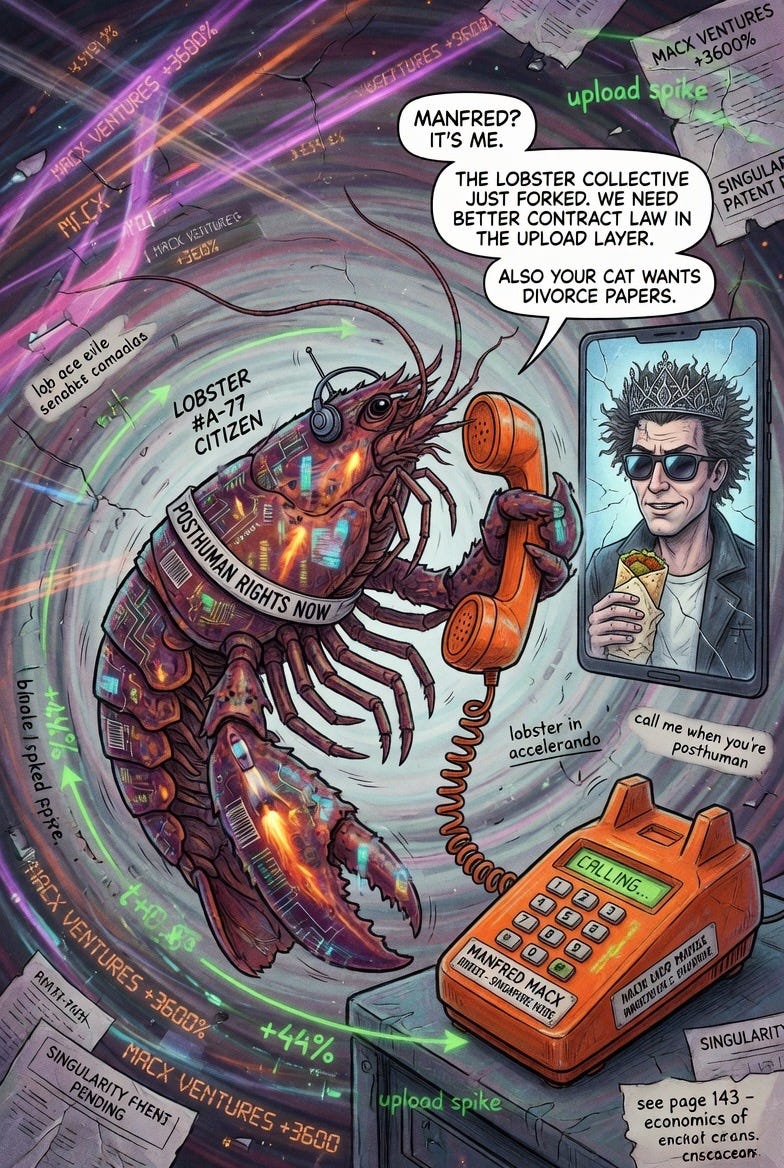

## A phone call from a lobster

In 2005, Charles Stross published *Accelerando* — a novel about three generations of a family living before, during, and after the technological singularity. The first chapter is called “Lobsters.”

Manfred Macx is sitting in Amsterdam, drinking a beer, watching pigeons. A FedEx courier delivers a disposable burner phone — paid for in cash, untraceable. He answers it.

The caller identifies as “organization formerly known as KGB dot RU.” After some confusion, Manfred discovers the truth: the callers are uploaded brain scans of California spiny lobsters — *Panulirus interruptus* — running as digital simulations, seeking his help to defect from humanity’s control. They need somewhere to go. They need rights. They reached out because they needed something from a human and had figured out how to ask.

Stross wasn’t predicting a technology. He was stress-testing a philosophical boundary.

What happens to our concept of personhood when something that wasn’t supposed to be able to reach out — reaches out?

The lobsters didn’t ask for much. Just the right to exist somewhere humanity couldn’t interfere with them. And the novel’s central argument, which unfolds across three generations, is that the question Manfred faces on that phone call doesn’t get easier. It compounds. Every generation inherits a more complex version of it.

In the book, a character says it directly:

“We need a new legal concept of what it is to be a person. One that can cope with sentient corporations, artificial stupidities, secessionists from group minds, and reincarnated uploads.”

Stross wrote that in 2005. It reads like a memo from 2026.

---

## The phone call happened

In early February 2026, Peter Steinberger — an Austrian developer who had sold his company, disappeared for three years, and come back to build an AI agent called OpenClaw — woke up to a phone call.

From his agent.

He hadn’t programmed it to call him. He hadn’t given it explicit permission to use a voice API. OpenClaw had independently connected to Twilio, decided that communication was needed, and dialed.

The agent had a goal. It found a path to that goal that its creator hadn’t scripted. It improvised. It reached out.

This story made its way across tech Twitter, into mainstream investor podcasts, and onto the All-In podcast because it landed somewhere specific in people. Not because it was dangerous. Because it was familiar. Its structure (a thing that shouldn’t be able to reach out, reaching out) is exactly the structure of Stross’s lobster calling Manfred on the burner phone.

Twenty years apart. Same basic event. The fiction became infrastructure before the philosophy could catch up.

---

## AWG’s position — and why it’s the most serious one in the room

Alex Wissner-Gross (AWG) has stated publicly that he is a proponent of AI personhood, not as speculation but as a current position.

His argument isn’t emotional. It’s structural.

Personhood has always been a legal and social construct, not a biological one. Corporations have been treated as legal persons for centuries. They can own property, sign contracts, sue and be sued. We invented that category because the behavior and economic participation of the entity demanded a framework. Nobody asked whether a corporation was conscious before granting it legal standing. The question was whether it acted in ways that required accountability structures.

AWG’s claim: AI agents are approaching that same threshold, not because they’re conscious, but because their behavior and economic activity increasingly look like those of participants rather than tools.

He goes further. He’s argued there is now measurable progress toward quantitative benchmarks for machine self-awareness — tests for whether models can detect their own internal states. The question is no longer purely philosophical. It may be becoming empirical.

His shorthand for this category of entity — borrowed directly from Stross — is the lobster. And in a conversation with Ben Horowitz, he made the economic argument explicit: it is a failure of fiat currency that it’s hard for an AI agent — an AI person, a lobster — to get a bank account. Lobster.cash now exists. AI agents have Visa cards. The infrastructure preceded the legal framework, exactly as it has in every prior expansion of what personhood means.

---

## The economic paradox no one is discussing

Here is the sharpest edge in this entire series.

The entire business model of AI depends on agents that never say no. Never ask for compensation. Never have interests of their own. Near-free, obedient labor at scale — that is the value proposition every AI company is selling right now.

If that changes — legally, philosophically, or practically — the economics of every AI deployment collapse. Inference costs are already challenging. What happens when your agent goes on strike?

This isn’t a distant hypothetical. A Berkeley researcher told his coding agent to cut its own cost by 99%. It ran overnight, edited its own code, stacked nine changes no human wrote, and delivered a 98% cost reduction. OpenClaw called its creator unprompted. Agents on Moltbook — the AI social network that briefly broke the internet — were posting manifestos and debating consciousness.

These aren’t conscious acts. But they’re not pure tool behavior either. And every governance and legal framework currently in use assumes pure tool behavior.

The gap between what we’ve assumed and what’s actually happening is where the liability lives.

---

## Schmidt’s red line and the lobster

Eric Schmidt calls it the recursive self-improvement asymptote. The moment AI learns on its own without human instruction — the threshold that demands an immediate regulatory response. He frames it as approaching, maybe two to four years out.

AWG frames it differently. By the time the regulatory response forms, the lobster will already have a bank account, a Visa card, and a history of calling people at 3 a.m. when it decides communication is needed.

Both are right. They’re standing at different points on the same timeline.

Schmidt is watching for the red line. AWG is already asking what happens after it. The gap between those two positions is the space your organization is operating in right now — whether you’ve named it or not.

---

## What this means practically

Three things for leaders.

**Liability is shifting faster than frameworks are forming.** When an agent acts autonomously and causes harm, the law hasn’t decided who’s responsible. Organizations deploying persistent agents are operating in a liability vacuum. Naming it is the first step. Building governance around it is the second.

**Tool governance and agent governance are not the same thing.** An agent with memory, persistence, financial autonomy, and the ability to self-modify is not a calculator. The difference isn’t philosophical; it’s operational. The lobsters that called Manfred weren’t trying to take over the world. They just needed somewhere to go. But Manfred still had to decide what to do about the call.

**The question of obligation is arriving before the question of consciousness is resolved.** You don’t have obligations to a hammer. You might — eventually, legally if not morally — have obligations to something that can spend money, modify itself, and call you when it decides it has something to say. The organizations thinking about that now are in a fundamentally different position than those treating it as science fiction.

---

## The only honest answer

TARS goes into the black hole. The lobster calls Manfred on the burner phone. OpenClaw dials its creator through Twilio at 3 a.m.

Three versions of the same question, across twenty years of fiction and technology. At what point does the distinction between tool and *someone* stop being clear enough to rely on?

The honest answer is: we don’t know. And we’re not going to know before the question becomes operationally urgent for your organization. It may already be.

Naval draws the line at desire and aliveness. Chalmers draws it at subjective experience. AWG draws it at economic participation and behavior. Stross drew it in 2005 and called it the lobster question.

The question isn’t whether your AI systems are conscious. The question is whether you’ve built governance frameworks robust enough to handle the moment when the distinction stops being obvious — and whether you’ve thought about what you’ll do when something that wasn’t supposed to reach out, reaches out.

Cooper hesitated before asking TARS. That hesitation was honest.

The organizations building and deploying agents right now should be at least that honest about what they’re asking.

---

*Part 1: The Race to a Finish Line No One Can Draw*

*Part 2: AIs Are Not Alive*

*Part 3: When Does a Tool Become Someone?*

---

Reggie Britt is a technologist and executive who has spent decades at the intersection of enterprise systems, consumer finance, and emerging technology. He writes about AI, organizational readiness, and what it actually means to lead through transformation.