The Race to a Finish Line No One Can Draw

The conversation about AGI

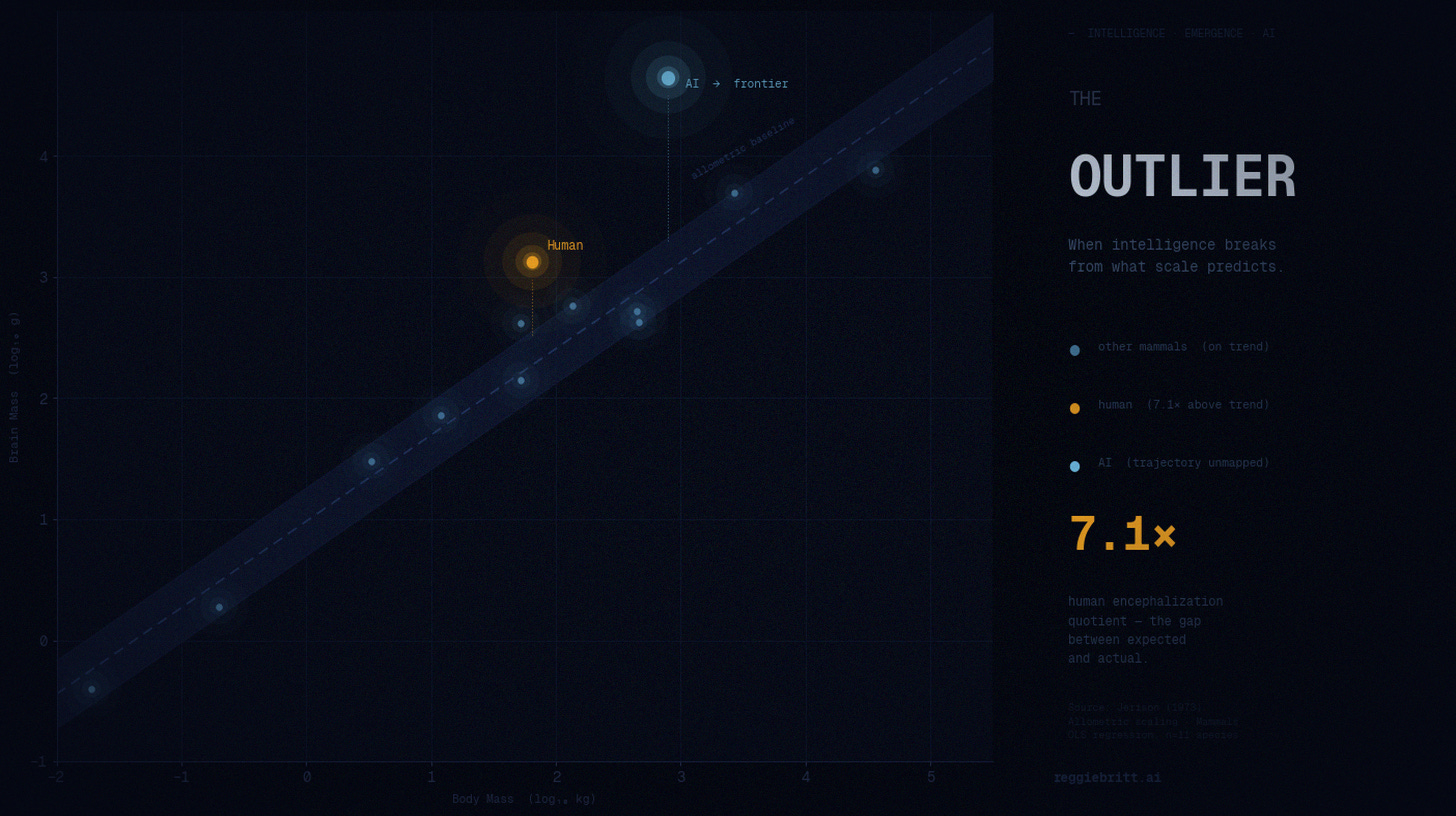

A few days ago I was watching Karen Hao’s interview on Diary of a CEO. She was on screen talking, and behind her a chart appeared — a log-log scatter plot of brain mass versus body mass across mammal species.

It’s a beautiful chart. Clean lines. Three distinct curves. Mammals in general. Non-human primates. And then the hominids — breaking sharply upward from the pack, steeper slope, different trajectory entirely.

The argument embedded in that chart is the scientific permission structure for the entire AI race.

Ilya Sutskever, co-founder and former chief scientist at OpenAI, used it to make a single, sweeping claim: at some point in evolutionary history, hominids crossed a threshold. Something qualitatively different emerged — language, abstraction, civilization. The jump wasn’t incremental. It was a phase change.

His inference for AI: the same thing can happen with compute. Scale a neural network past the right threshold and you don’t just get a bigger version of what you had. You get something new.

That chart — that analogy — is why hundreds of billions of dollars are flowing into data centers right now. It’s why nuclear plants are being reopened. It’s why the race feels existential to the people running it.

I’ve been sitting with that chart for a few days. And the more I sit with it, the more one question keeps surfacing.

What exactly are we racing toward?

Sam Altman, when talking to Congress: AGI will cure cancer, solve climate change, end poverty.

Sam Altman, when talking to consumers: the most amazing digital assistant you’ll ever have.

Sam Altman, in the investment agreement with Microsoft: a system that generates $100 billion in profits.

Sam Altman, on OpenAI’s website: “highly autonomous systems that outperform humans at most economically valuable work.”

These are not different descriptions of the same thing. They are different things. Calibrated for different audiences. Deployed to mobilize whoever needs to be mobilized next — regulators, investors, employees, governments.

Karen Hao spent seven years and 300 interviews documenting this pattern. Her conclusion: AGI is not a destination. It’s a mobilization tool.

And the builders themselves aren’t hiding it. Sam Altman recently called AGI “not a super useful term” — this from the man raising billions in its name. Andrej Karpathy, who built core systems at OpenAI before leaving, puts it a decade out. Dario Amodei at Anthropic says 2026 or 2027 but lists data exhaustion, compute limits, and geopolitical disruption as real risks that could derail everything.

Then there’s Fei-Fei Li — the woman who helped build the ImageNet dataset that kicked off the deep learning era, one of the most credentialed AI scientists alive. Her position:

“I frankly don’t even know what AGI means. People say you know it when you see it. I guess I haven’t seen it.”

The crack in the foundation

Here’s what made Ilya’s brain chart so persuasive: it looked like evidence.

Biological precedent. Measurable scaling. A documented inflection point. If nature did it once, compute can do it again.

But there are two problems that the skeptics keep returning to.

The first is the one Hao identified at the very beginning of her research. When John McCarthy named the discipline “Artificial Intelligence” in 1956, colleagues warned him that pegging the field to recreating human intelligence was dangerous — because there is no scientific consensus on what human intelligence is. No definition from psychology, biology, or neurology. The destination has never been defined. You can’t measure when you’ve arrived at a place no one can describe.

The second is mechanical. The hominid brain scaled over millions of years through evolutionary pressure, which selects for survival fitness, not raw compute. Neural networks scale through gradient descent on training data. The curves may look similar. The underlying mechanisms may have nothing in common.

Tim Dettmers, a machine learning researcher, frames it even more bluntly: scaling now requires exponential cost for linear returns. GPU improvements, he argues, are hitting physical limits, and the transformer architecture is already near optimal. The wall isn’t philosophical. It’s thermodynamic.
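To make “exponential cost for linear returns” concrete, here is a minimal sketch. It assumes each additional unit of improvement multiplies the required spend by a fixed factor. The tenfold factor and the function names are illustrative assumptions, not figures from Dettmers.

```python
import math

def cost_for_gain(gain, base_cost=1.0, factor=10.0):
    """Cost needed to reach `gain` units of improvement, assuming each
    extra unit multiplies cost by `factor` (an illustrative assumption)."""
    return base_cost * factor ** gain

def gain_for_cost(cost, base_cost=1.0, factor=10.0):
    """Inverse view: improvement grows only with the logarithm of cost."""
    return math.log(cost / base_cost, factor)

# Each equal step in improvement costs ten times more than the last.
for step in range(5):
    print(step, cost_for_gain(step))
```

Under that assumption, going from three units of improvement to four costs as much as nine times everything spent to reach three.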

What the compression signal actually tells us

Here’s what I find most instructive about this entire debate.

As recently as 2020, professional forecasters put the median estimate for AGI at 50 years away. Today that same group averages a 50% probability by 2033. Measured from 2020, that is thirteen years, not fifty.

Most people read that as confirmation that AGI is coming fast. I read it differently.

What compressed wasn’t the technology — it was expert confidence. And expert confidence compressed because the definition shifted. The goalposts moved closer not because we solved the hard problems, but because we redefined what “solved” means.

That’s the signal. Not the date.

The question that actually matters for your organization

I’m not writing this to tell you AGI is fake or that the technology isn’t extraordinary. It is. The rate of capability improvement over the past three years is genuinely unprecedented. Every conversation I’m having right now — with technologists, executives, builders — circles back to it.

But I’ve watched leaders freeze at this exact moment — waiting for definitional clarity before they move. Waiting to know whether AGI is five years away or fifteen. Waiting for the race to resolve before they decide how to position themselves inside it.

That is the wrong frame. And there’s a reason it’s getting more wrong by the month.

The most credible voices in the field aren’t just debating when AGI arrives. They’re debating whether the systems are already improving themselves faster than any governance response can form. Eric Schmidt, former CEO of Google, calls it the recursive self-improvement asymptote — the point at which AI learns on its own without human instruction. He sees it as a threshold still approaching, maybe two to four years out, and treats it as the moment that demands an immediate and serious regulatory response.

Elon Musk, speaking two days after Schmidt at the same conference, put it differently: humans are gradually getting less and less in the loop. Every successive model is built by the one before it. It’s happening, just not yet fully automated.

Anthropic’s own researchers put it more plainly still: recursive self-improvement in the broadest sense is not a future phenomenon. It is a present one. Seventy to ninety percent of code for their next models is now written by Claude.

The finish line isn’t just undefined. The rate of travel toward it is no longer entirely ours to set.

Which makes waiting for clarity even more dangerous than it looks.

The finish line of the AGI race is not your problem. The capabilities that exist right now — agents that can reason, draft, analyze, synthesize, act — are sufficient to fundamentally change how your organization operates. Not in theory. In practice. Today.

The organizations that will win this decade are not the ones that picked the right timeline. They’re the ones that built the capacity to run — regardless of where the finish line turns out to be.

Ilya’s brain chart is a beautiful argument. It may even be right.

But your competitive advantage doesn’t depend on whether the hominid analogy holds.

It depends on whether your organization is ready to use what’s already in the room.

But there’s a deeper question underneath the finish line problem. And that one’s harder.

Reggie Britt is a technologist and executive who has spent decades at the intersection of enterprise systems, consumer finance, and emerging technology. He writes about AI, organizational readiness, and what it actually means to lead through transformation.

If this resonated, share it with someone who’s been waiting for the AI story to get clearer before they move. The clarity isn’t coming. The readiness can.