The Agentic Jevons Trap

Why AI efficiency gains are accelerating the very risks they claim to solve

The February jobs report landed like a data point that didn’t know what story it was supposed to tell.

92,000 jobs lost. Forecasters expected gains of 59,000. A miss of 151,000 — in a single month. The coverage split immediately: some called it an AI displacement signal, others attributed it to federal workforce cuts and macro noise. The debate about which story is correct misses the more important point.

Both can be true. And if both are true, the mechanism underneath them is the same.

The Original Trap

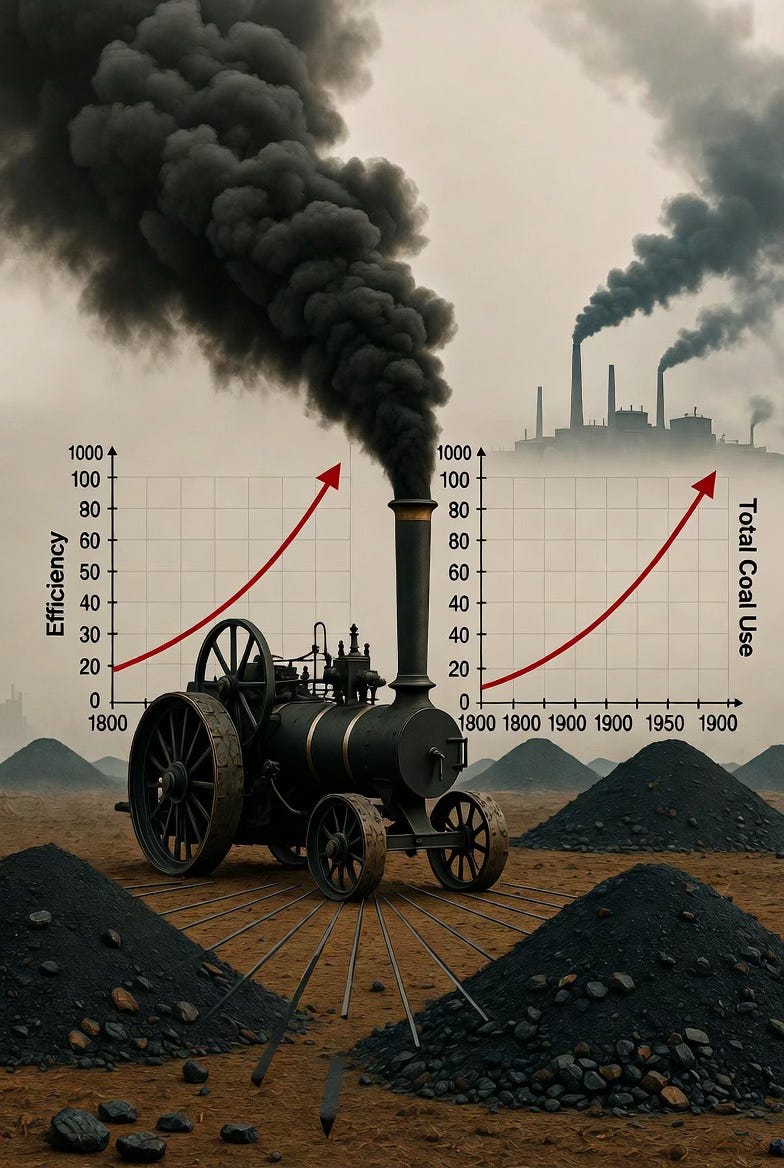

In 1865, the English economist William Stanley Jevons observed something that shouldn’t have been possible. Over the preceding decades, steam engines had become dramatically more efficient, burning far less coal to produce the same output. By every intuitive measure, coal consumption should have declined.

It accelerated.

Jevons concluded that efficiency gains in energy use don’t reduce consumption. They reduce the cost of consumption, which expands the range of applications that become economically viable, which increases total demand. The more efficiently you use a resource, the more of it you use.

This became Jevons’ Paradox: technological efficiency in resource use tends to increase, not decrease, the overall rate of that resource’s consumption.
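Jevons’s mechanism can be made concrete with a stylized model. This is a textbook rebound-effect sketch, not Jevons’s own formulation, and it assumes constant-elasticity demand for the service a resource provides. Here e is efficiency (service delivered per unit of resource), p_R is the resource price, A is a demand-scale constant, and ε is the price elasticity of demand for the service:

```latex
\begin{aligned}
p_S &= \frac{p_R}{e} && \text{effective price of the service} \\
S   &= A\,p_S^{-\varepsilon} = A\,p_R^{-\varepsilon}\,e^{\varepsilon} && \text{demand for the service} \\
R   &= \frac{S}{e} = A\,p_R^{-\varepsilon}\,e^{\varepsilon - 1} && \text{resource consumed} \\
\frac{\partial \ln R}{\partial \ln e} &= \varepsilon - 1
\end{aligned}
```

Under these assumptions, when ε > 1, resource consumption rises as efficiency improves, which is Jevons’s case. When 0 < ε < 1, consumption still falls, but by less than the efficiency gain alone would suggest. With ε = 1.5, for instance, doubling efficiency multiplies resource use by 2^0.5, roughly 1.41.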

For 160 years, the paradox was primarily an energy and environmental economics problem. Then agentic AI arrived — and the paradox found a new host.

The Agentic Reframe

The resource is no longer coal. It is organizational capacity — the cognitive, operational, and governance bandwidth that organizations use to coordinate work.

The efficiency claim of agentic AI is real. Agents automate workflows, compress decision cycles, eliminate coordination overhead. A process that required ten human touchpoints can be reduced to two. The math looks compelling.

But the Jevons dynamic is already running. When you reduce the marginal cost of deploying AI agents, the number of workflows organizations attempt to automate expands. The ambition scales with the capability. The surface area of exposure — to failure, to unintended consequences, to compounding risk — grows faster than the governance infrastructure to manage it.

This is not a future projection. The evidence is datable.

CENTCOM: Governance Void at T=0

Around the same time the February jobs report landed, a separate signal confirmed the failure mode on the adoption side.

CENTCOM deployed AI into operational workflows on the same day the capability became available — before governance policy had been written, before oversight structures had been established, before the organization had built the infrastructure to sustain what it was deploying.

The restraint mechanism meant to hold deployment back until governance caught up gave way almost as soon as it was installed. Capability deployment outpaced governance within hours.

This is the Jevons dynamic at the organizational scale: the efficiency of deployment, meaning the low friction of standing up AI agents, increases the rate at which organizations attempt to deploy them. The governance infrastructure required to sustain those deployments cannot be built at the same speed. The gap between what organizations can deploy and what they can govern widens with every capability release.

Policy declarations are not restraint mechanisms. They are documents. The capability moves faster.
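The claim that the gap widens with every release can be illustrated with a deliberately crude toy model. It assumes, hypothetically, that each capability release multiplies the number of attempted deployments by a fixed factor while governance capacity grows by a fixed increment. The function name `governance_gap` and every parameter value are invented for illustration; they are not estimates of CENTCOM or any real organization.

```python
def governance_gap(releases: int,
                   initial_deployments: float = 10.0,
                   deployment_growth: float = 1.5,
                   initial_governance: float = 10.0,
                   governance_increment: float = 5.0) -> list[float]:
    """Hypothetical toy model: ungoverned deployments after each release.

    Each release multiplies attempted deployments by `deployment_growth`
    (cheaper agents mean more workflows attempted), while governance capacity
    only grows by `governance_increment` (policies, reviewers, and tooling
    take time to build). All defaults are illustrative assumptions.
    """
    deployments = initial_deployments
    governance = initial_governance
    gaps = []
    for _ in range(releases):
        deployments *= deployment_growth        # geometric growth in deployments
        governance += governance_increment      # linear growth in oversight
        # Clamp at zero while governance still keeps up with deployments.
        gaps.append(max(0.0, deployments - governance))
    return gaps


for release, gap in enumerate(governance_gap(6), start=1):
    print(f"release {release}: ungoverned deployments = {gap:.2f}")
```

The design choice doing the work is geometric versus linear growth: with any `deployment_growth` above 1 and a fixed `governance_increment`, deployments eventually overtake governance and the gap grows without bound. The model says nothing about real magnitudes or timing.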

SWE-CI: Governance Void at T+8 Months

CENTCOM is the governance failure at the moment of adoption. A research paper published on March 4, 2026, documents the governance failure that comes later.

Researchers from Alibaba Group and Sun Yat-sen University built the first AI coding benchmark that measures agents not on a snapshot test (the agent receives a problem and produces a solution) but across a full production evolution timeline. The benchmark comprises 100 tasks drawn from real codebases, each spanning an average of 233 days and 71 consecutive commits. Agents were evaluated not just on whether they solved the immediate problem, but on whether the code they produced could sustain the codebase through months of continued evolution.

The headline finding: most models achieve a zero-regression rate below 0.25. In other words, in more than 75% of cases, AI agents that pass standard coding benchmarks introduce regressions when they maintain real codebases over timelines averaging roughly eight months.

An agent that hard-codes a brittle fix and one that writes clean, extensible code may both pass the same test suite. Their difference becomes visible only when the codebase must evolve — when new requirements arrive, interfaces change, and modules must be extended.
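The divergence between snapshot success and long-horizon maintenance can be sketched in a few lines. This assumes, for illustration, that the zero-regression rate is the share of tasks an agent maintains across the full commit history without introducing any regression. The `TaskResult` record, its field names, and the ten results below are hypothetical, not SWE-CI’s actual schema or data.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Hypothetical per-task record; field names are illustrative, not SWE-CI's schema."""
    task_id: str
    passed_snapshot: bool  # did the agent's first change pass the task's tests?
    regressions: int       # regressions introduced across the task's commit history


def snapshot_pass_rate(results: list[TaskResult]) -> float:
    """Share of tasks whose initial change passed: the one-shot benchmark view."""
    return sum(r.passed_snapshot for r in results) / len(results)


def zero_regression_rate(results: list[TaskResult]) -> float:
    """Share of tasks maintained over the whole timeline with no regressions (assumed definition)."""
    return sum(r.regressions == 0 for r in results) / len(results)


# Made-up results for ten tasks, chosen only to illustrate the divergence.
results = [
    TaskResult("t1", True, 0), TaskResult("t2", True, 3),
    TaskResult("t3", True, 1), TaskResult("t4", True, 2),
    TaskResult("t5", True, 0), TaskResult("t6", True, 4),
    TaskResult("t7", False, 5), TaskResult("t8", True, 1),
    TaskResult("t9", True, 2), TaskResult("t10", True, 1),
]

print(f"snapshot pass rate:   {snapshot_pass_rate(results):.3f}")  # 0.900
print(f"zero-regression rate: {zero_regression_rate(results):.3f}")  # 0.200
```

Under these invented numbers, nine of ten tasks pass the one-shot check, yet only two of ten survive the full timeline without a regression: exactly the kind of gap a snapshot benchmark cannot see.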

This is not a capability gap. It is a governance gap. The organizations deploying AI agents at speed, on the strength of short-horizon benchmark performance, are not building the oversight infrastructure to detect degradation as it accumulates. They are accelerating a dynamic that already existed in human-maintained code — and compounding it.

The Anthropic Signal

The Anthropic Economic Index, published in early March 2026, provided a labor-market anchor for what the Jevons dynamic predicts.

The paper found AI most heavily used to automate existing tasks rather than to augment human work or create new tasks. Adoption is uneven: computer and math occupations show the highest theoretical capability exposure (94%), yet observed AI coverage of only 33%. The gap between what AI can theoretically do and what organizations have actually integrated is the readiness gap, and the Jevons dynamic is running in both directions simultaneously.

Organizations that automate efficiently create pressure to automate more. Organizations that haven’t automated yet face competitive exposure that accelerates adoption without governance. Both trajectories compound the same underlying problem: the efficiency of deployment has outrun the capacity to govern what gets deployed.

The February jobs report is, in this frame, not primarily a story about AI replacing workers. It is a story about organizations that optimized for deployment speed without building the readiness infrastructure to sustain it. When capability scales faster than the organization can absorb it, the excess goes somewhere. Sometimes it goes to regressions in production code. Sometimes it goes to workforce restructuring that moves faster than institutional knowledge can be transferred. Sometimes it goes to governance vacuums that the next capability release will find already open.

The Paradox in Full

The Agentic Jevons Trap is not the observation that AI will increase demand for labor in the long run — though it might. It is the observation that the efficiency of AI capability deployment is increasing the rate at which organizations consume governance capacity, oversight infrastructure, and organizational readiness.

Every capability release that lowers the cost of deploying an agent expands the number of workflows organizations attempt to automate. Every expansion in automated workflows increases the surface area of exposure to the failure modes SWE-CI and CENTCOM document. Every governance vacuum left open when capability outpaces oversight becomes a liability that compounds over production timelines like SWE-CI’s 233-day average, which no benchmark was measuring until now.

The paradox is structural. It does not resolve by deploying better AI. It resolves only by building the organizational readiness infrastructure that can govern what the capability enables.

That is a different kind of work. It is the work nobody is selling.

The Counter-Paradox

Jevons himself did not have an answer to his paradox. The efficiency gain was real. The consumption increase was real. The gap between them was a structural feature of how markets respond to cost reduction.

The counter-paradox for the agentic version is governance as a restraint mechanism — not as a document, not as a policy declaration, but as infrastructure. The organizations that build oversight architecture before they need it, that establish the readiness primitives before the capability arrives, that treat governance as a design constraint rather than a compliance checkbox — those organizations close the gap between deployment speed and absorption capacity.

The window for that work is open. It is not wide.

The February jobs report is not a warning from the future. It is a reading from organizations that already ran the experiment. CENTCOM and the SWE-CI data bracket the failure arc: governance void at adoption, governance void at maintenance, compounding across every timeline in between.

The technology has arrived. The question is whether the organization has.